How Can I Evaluate My Cognitive Automation?

What Is Cognitive Automation?

If you have ever faced a daunting task at work that you believe should be automated in the AI era, then you already understand the need for cognitive automation. Cognitive automation refers to the use of AI to automate repetitive tasks and processes that would otherwise be performed manually by a person or even a whole team.

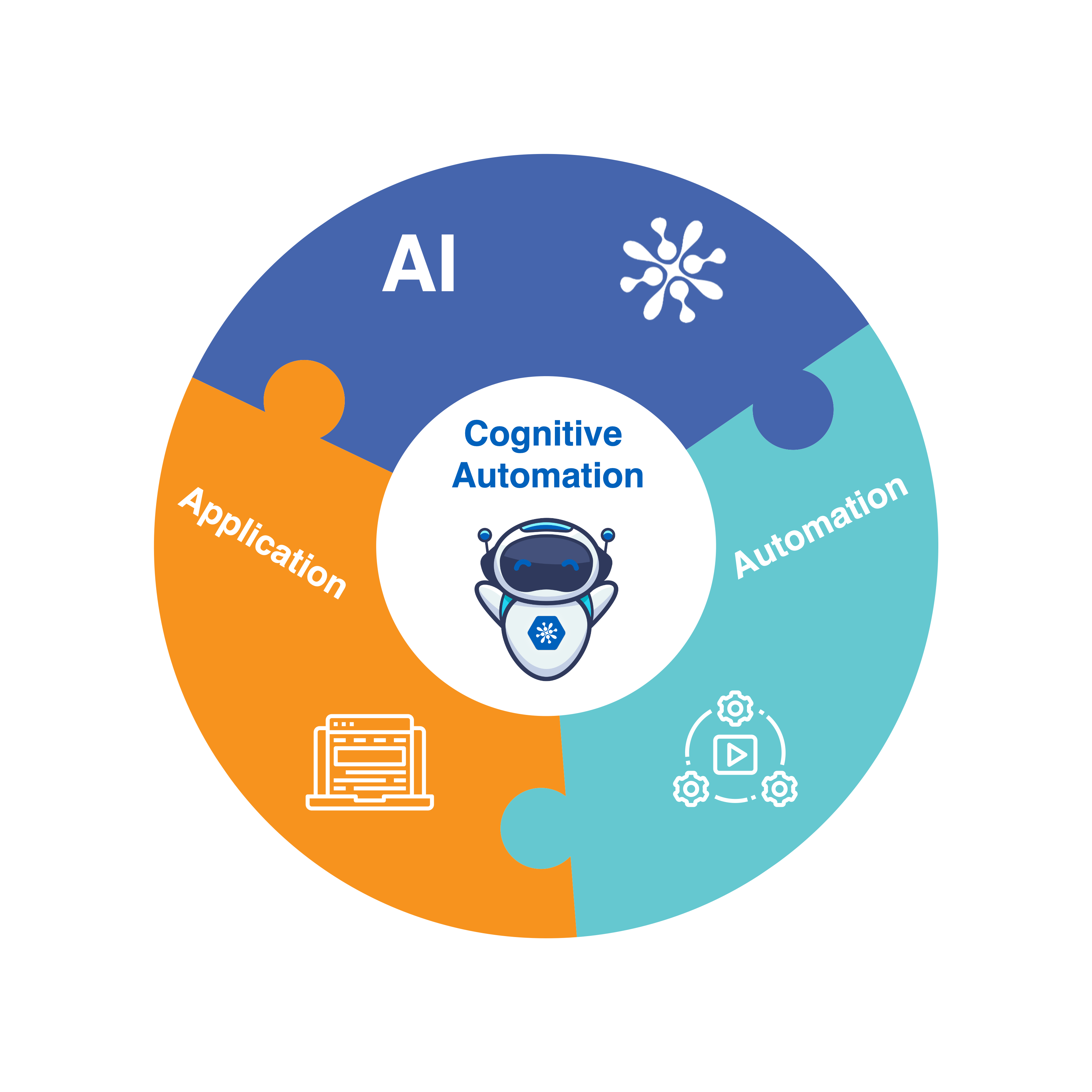

Three components come together to make this happen:

1. AI: To perform the core tasks that require human intelligence, such as comprehending and analyzing text

2. Automation: To execute a task or a workflow based on the AI outcomes

3. Application: The system where this task or activity should be performed, such as an ERP, a CRM, or a ticketing system

How To Evaluate Your Cognitive Automation Use Case

The value of cognitive automation is clear: completing tasks faster, saving time, and reducing operational costs. Evaluating your use case lets you measure your progress towards that goal and serves as a checkpoint for refining your approach. It is best to structure the evaluation around the three components mentioned above: AI performance, automation integration, and application setup.

Evaluating the AI Performance

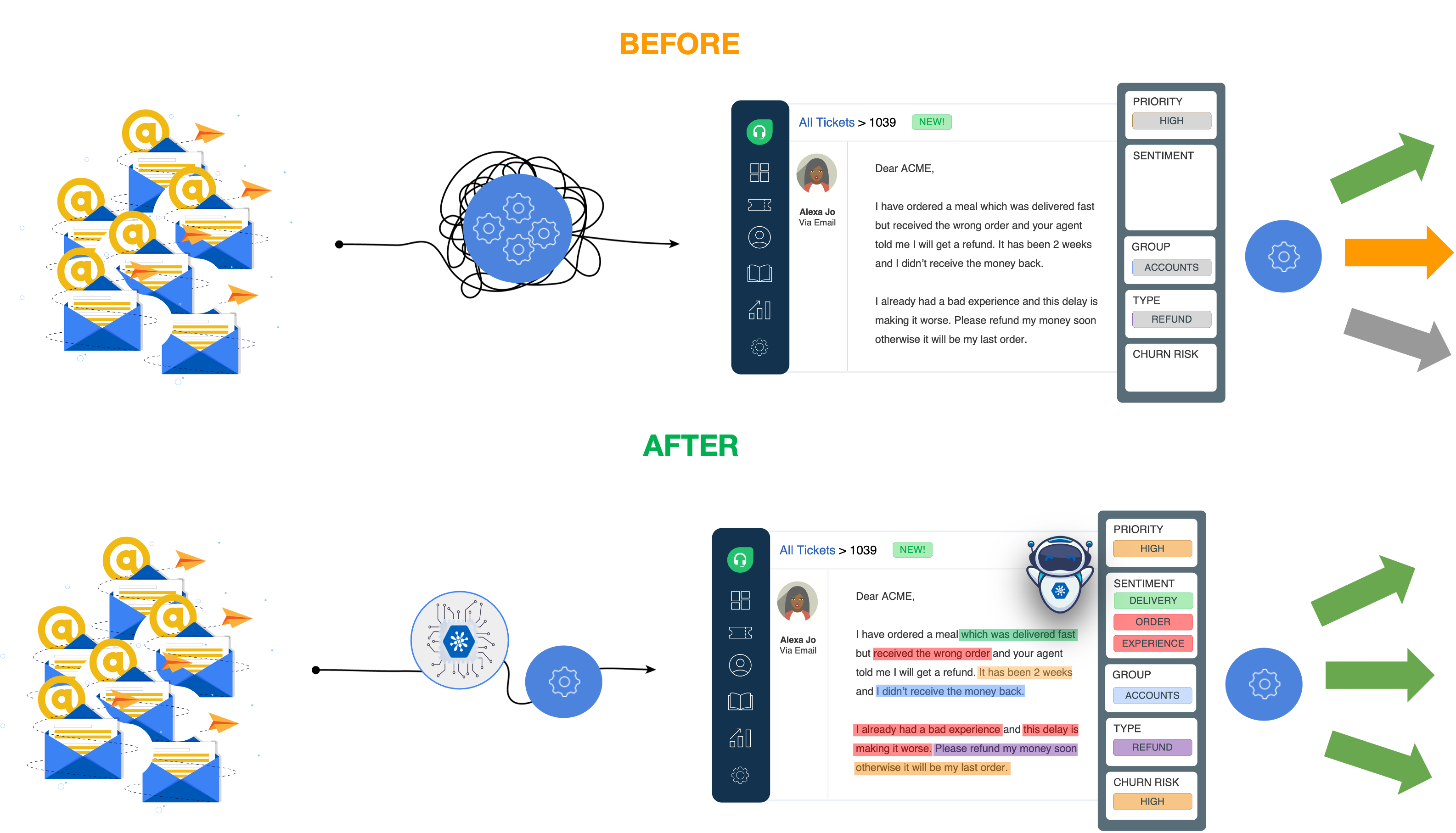

Evaluating the AI performance is the first step in your evaluation process. Let's take document classification as an example; it is useful for many tasks, such as customer service ticket routing. The task is to assign the right category or label to a document, such as an email. The AI model analyzes the text and assigns a category based on its content, as illustrated in the diagram above.

Evaluating the AI model requires a pragmatic approach, for a simple reason: like a human, an AI model keeps improving as it sees more examples. AI models also resemble humans in another way: they can perform a task with decent quality after seeing a few examples, but it takes significantly more time and effort to build that additional 10% of knowledge and become a domain expert. With DeepOpinion's proprietary technology, an AI model requires significantly fewer examples but follows a similar trend.

Therefore, the pragmatic evaluation metric is the point at which the AI model's accuracy becomes useful to your application. This varies from one use case to another. For example, at what accuracy would you speed up your resolution time by 70% or reduce your misrouted tickets by 50%? Once you reach this point, you can release a model and start realizing its value in your business process. In our experience, most applications can start realizing positive business value at 70% accuracy. Our customer success team can work closely with you to define your go-live accuracy based on your application and business case.
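
As a rough illustration of this check, the sketch below compares a model's predictions against a small hand-labeled test set and reports whether the agreed accuracy has been reached. The function names, the sample labels, and the `GO_LIVE_ACCURACY` constant are assumptions made for this example and are not part of DeepOpinion Studio; the 0.70 value simply mirrors the starting point mentioned above.

```python
# Assumed threshold for illustration; agree on the real value per use case.
GO_LIVE_ACCURACY = 0.70


def accuracy(true_labels, predicted_labels):
    """Fraction of documents whose predicted category matches the true one."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    if not true_labels:
        raise ValueError("need at least one labeled example")
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


def is_go_live_ready(true_labels, predicted_labels, threshold=GO_LIVE_ACCURACY):
    """Return True once test-set accuracy reaches the agreed threshold."""
    return accuracy(true_labels, predicted_labels) >= threshold


# Made-up data: ten hand-labeled tickets and the model's predictions for them.
true_labels = ["billing", "delivery", "billing", "returns", "delivery",
               "billing", "returns", "delivery", "billing", "returns"]
predicted = ["billing", "delivery", "returns", "returns", "delivery",
             "billing", "returns", "billing", "billing", "delivery"]

print(f"accuracy = {accuracy(true_labels, predicted):.2f}")
print("go-live ready:", is_go_live_ready(true_labels, predicted))
```

In practice the test set should consist of documents the model has not seen during training, otherwise the measured accuracy will look better than it is.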

An important question here is how to get closer to human-level, or even domain-expert, accuracy. DeepOpinion Studio is a learning system and continues to improve after going live in multiple ways. One is Active Learning, where the AI model asks you to validate what it has learned; another is feedback from your users, who correct its predictions in your application, such as a ticketing system. This way, the sooner you go live, the sooner your AI model can learn from new examples, much like on-the-job training.
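
To make the idea of a model asking for validation more concrete, here is a minimal sketch of uncertainty sampling, one common form of active learning. It is not necessarily how DeepOpinion Studio selects examples; the `select_for_review` function, the ticket ids, and the confidence scores are all invented for illustration.

```python
def select_for_review(predictions, max_items=2):
    """Pick the predictions the model is least confident about.

    `predictions` is a list of (document_id, predicted_label, confidence)
    tuples, where confidence is the model's probability for its top label.
    """
    ranked = sorted(predictions, key=lambda item: item[2])
    return ranked[:max_items]


# Made-up model output; ids, labels, and scores are for illustration only.
predictions = [
    ("ticket-1", "billing", 0.97),
    ("ticket-2", "delivery", 0.52),
    ("ticket-3", "returns", 0.88),
    ("ticket-4", "billing", 0.41),
]

for doc_id, label, confidence in select_for_review(predictions):
    print(f"please confirm {doc_id}: {label} (confidence {confidence:.2f})")
```

Reviewing the least confident predictions first tends to give the model the most useful new examples for the least human effort.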

Evaluating the Automation Integration

This part is straightforward. The thing to test is that the integration works and executes the right task in your application. For example, once you activate your automation and receive a new ticket, you should see the ticket priority field populated with a priority. This confirms that the setup works properly and the task is executed. It is advisable to run such tests in a sandbox environment so that you do not interfere with your current setup until you are ready to go live.
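
A check like this can be scripted. The sketch below uses an in-memory stand-in for a sandbox ticketing system, since the real client depends on your application; `SandboxTicketingSystem`, `stub_priority_model`, and `run_automation` are hypothetical names, and the stub model is only a placeholder for the actual AI model.

```python
class SandboxTicketingSystem:
    """In-memory stand-in for a sandbox ticketing system (hypothetical)."""

    def __init__(self):
        self.tickets = {}

    def create_ticket(self, ticket_id, body):
        # New tickets start without a priority, as before the automation runs.
        self.tickets[ticket_id] = {"body": body, "priority": None}

    def set_priority(self, ticket_id, priority):
        self.tickets[ticket_id]["priority"] = priority

    def get_ticket(self, ticket_id):
        return self.tickets[ticket_id]


def stub_priority_model(body):
    """Placeholder for the AI model; a real model would be called here."""
    return "Urgent" if "delayed" in body.lower() else "Normal"


def run_automation(system, ticket_id, model=stub_priority_model):
    """The automation step: read the ticket, predict, write the field."""
    ticket = system.get_ticket(ticket_id)
    system.set_priority(ticket_id, model(ticket["body"]))


def check_priority_is_assigned():
    """Integration check: a new ticket ends up with a valid priority."""
    sandbox = SandboxTicketingSystem()
    sandbox.create_ticket("T-1", "My order is delayed, please help.")
    run_automation(sandbox, "T-1")
    priority = sandbox.get_ticket("T-1")["priority"]
    assert priority in {"Urgent", "Normal"}, "priority field was not set"
    return priority


print("priority assigned:", check_priority_is_assigned())
```

Note that this check only verifies that the field gets written; whether the assigned priority is correct is a question for the AI performance evaluation.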

Evaluating the Application Setup

This point is specific to workflow automation. In some cases you might be performing a task manually, while in others you might already have a system in place that automates some of the tasks to a certain level. In the latter case, you need to ensure that cognitive automation plays nicely with what you already have. For example, you might have two rules in place: the first searches for the keyword "Delayed" in the body of an email, and the second sets the priority to "Urgent". Once you integrate cognitive automation, you would replace the first rule with a ticket priority classification AI model for better accuracy and feed its output to the second rule. In some cases you might have a few dozen rules, and it is important to configure them carefully so that your workflow gets the best of both and enhances your productivity.
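
The two-rule example can be sketched as follows, with the first step made pluggable so the keyword rule can be swapped for a model while the second rule stays untouched. Everything here is an assumption for illustration: the function names, the "Normal" fallback priority, and especially `stub_classifier`, which only imitates a trained model by matching a few phrasings.

```python
def keyword_rule(email_body):
    """Original rule 1: flag emails whose body contains "Delayed"."""
    return "Delayed" in email_body


def stub_classifier(email_body):
    """Placeholder for a ticket priority classification model (made up).

    A real model would be trained on labeled tickets; this stub only
    recognizes a few delay-related phrasings to illustrate the idea.
    """
    phrases = ("delayed", "still waiting", "has not arrived")
    return any(phrase in email_body.lower() for phrase in phrases)


def assign_priority(is_flagged):
    """Rule 2, unchanged: flagged emails get priority "Urgent"."""
    return "Urgent" if is_flagged else "Normal"


def route(email_body, first_rule):
    """Run the two-step workflow with a pluggable first step."""
    return assign_priority(first_rule(email_body))


email = "I am still waiting for my parcel."
print("keyword rule:", route(email, keyword_rule))
print("classifier:  ", route(email, stub_classifier))
```

Keeping the second rule's input and output unchanged means the remaining rules in the workflow do not need to know whether a keyword match or a model produced the flag.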

We Are Here For You

Cognitive automation is a new technology, and you are one of the innovators and early adopters harnessing it. We are here to guide you on your journey with any question or idea you might have, and to help you refine your cognitive automation use case. We share a goal: to successfully implement your use case and improve your productivity. All you have to do is get in touch with our customer success team.